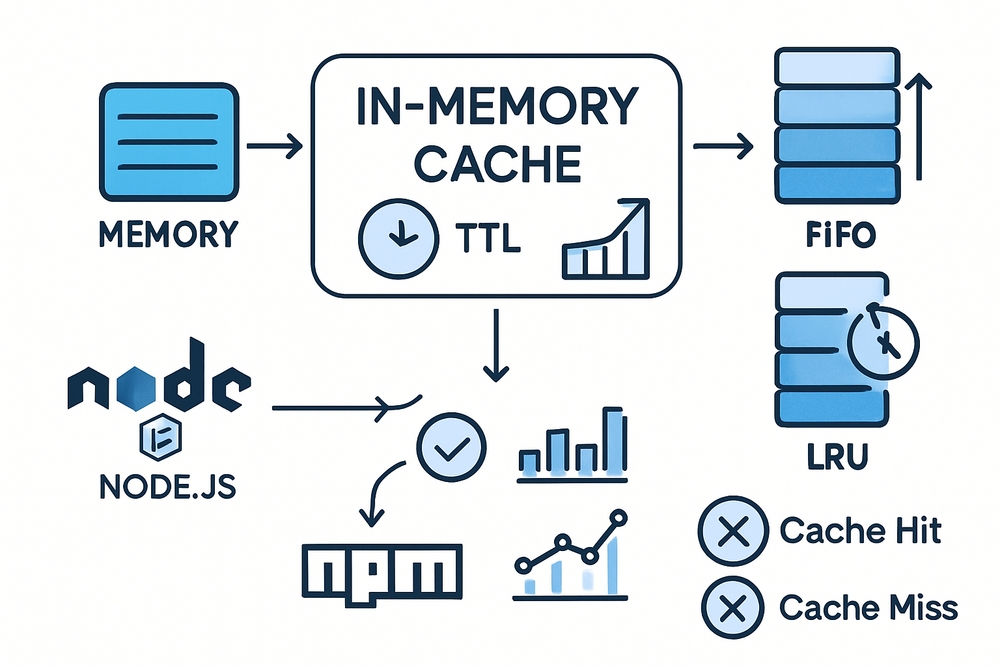

Demystifying Caching in Node.js: A Deep Dive Into My npm Package, Runtime Memory Cache

Building blazing fast Node.js applications often comes down to how well you handle data retrieval. Caching is the secret weapon for reducing latency, cutting costs, and enhancing user experience.

In this article, I introduce you to my runtime-memory-cache package, a zero-dependency, TypeScript-safe in-memory cache I built, initially for my personal projects but now shared as a lightweight, flexible tool in the Node.js ecosystem.

But beyond usage, I want to unpack why caching matters, how it fundamentally works, and why your cache implementation matters just as much as your code that uses it. We’ll go deep into eviction strategies, TTL, stats, and real-world usage patterns — all aligned strictly with the actual design and code in runtime-memory-cache.

1. What is Caching and Why Is It Important?

Modern web services usually rely on databases and remote APIs to fetch data dynamically. But often these systems respond with delays measured in tens or hundreds of milliseconds, sometimes seconds — a prohibitive latency for many user-facing applications.

The Simple Definition

Caching stores copies of data that were expensive to compute or fetch so that future requests serve those copies directly, reducing cost and latency.

Real-World Analogy

Imagine you always buy coffee from the same coffee shop by ordering through an app. The app fetches your favorite order (with a bunch of customization) from a back-end service. If every time you open the app, it asks the coffee shop’s server for your preferences and recent orders, the latency adds up.

If the app caches your favorite coffee order locally and refreshes it periodically, the app instantly shows you your usual drink without delay.

Caching as a Trade-Off

Caching is a trade-off:

- Benefit: Lower latency, fewer external requests.

- Cost: Consumes memory/storage and risks staleness (outdated data).

Adding caching thoughtfully improves scalability, user experience, and reduces cost. Getting it wrong leads to stale data, bugs, or memory exhaustion.

1.1 The Cost of Fetching Data Repeatedly

Modern backend systems may have multiple layers — DB queries, service calls, and external API calls. For frequently requested data like user profiles or product info, fetching repeatedly wastes resources.

Example: Retrieving a product catalog item may take 50ms from DB. Handling 1,000 such requests per second continuously adds 50 seconds worth of compute time per second — unsustainable at scale.

1.2 Caching Speeds Things Up

Serving those 1,000 requests from memory cuts that cost drastically, because memory is accessed in nanoseconds or microseconds. You can serve cached responses immediately instead of querying backend systems repeatedly.
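As a rough worked version of the numbers above, let $\lambda$ be the request rate, $t$ the per-query latency, and $h$ the cache hit ratio. The value $h = 0.9$ is an illustrative assumption, not a measurement:

```latex
\begin{align*}
\text{backend time per second (no cache)} &= \lambda \cdot t = 1000 \times 0.05\,\text{s} = 50\,\text{s} \\
\text{backend time per second (hit ratio } h\text{)} &= (1 - h)\,\lambda\,t = 0.1 \times 50\,\text{s} = 5\,\text{s} \quad (h = 0.9)
\end{align*}
```

Only the misses reach the database, so the backend load scales with $1 - h$.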

1.3 Common Types of Caches

| Type | Description | Data Location | Example Use Cases |
|---|---|---|---|
| Browser Cache | Local cache on user browser | Client (browser) | Static assets, API responses |
| CDN Cache | Distributed cache network | Edge servers | Static files, global content |
| Disk Cache | Persistent local cache | App server’s disk | Intermediate storage, logs |
| Distributed Cache | Shared cache across systems | External service (Redis/Memcached) | Session state, rate limiting counters |
| Runtime/In-Memory Cache | Local cache within your app process memory | Node.js process memory | Session tokens, hot data caching |

Our focus is the last: runtime in-memory cache — ultra-fast, lightweight, but ephemeral and process-local.

1.4 Why Runtime (In-Memory) Caches?

Your runtime cache lives inside your app process’s RAM. It’s:

- Fastest: No network or disk call.

- Scoped: Isolated per Node.js instance.

- Temporary: Data gone on restart.

Great for:

- Session tokens

- Rate limiting counters

- Hot DB/API query results

Not suited for:

- Data consistency across multiple servers

- Data durability after restart

You might ask:

How do I cache? Should I write my own?

That's why I built runtime-memory-cache: a zero-dependency, type-safe, minimal yet powerful JavaScript cache for Node.js apps, with TTL expiration, eviction policies (FIFO/LRU), and stats, built specifically for runtime caching needs.

2. Understanding Eviction Policies: FIFO, LRU, and Beyond

When using any in-memory cache, eviction policies are critical. They define which cache entries get removed when the cache reaches its maximum size or runs out of capacity. Because RAM is limited, caches cannot grow indefinitely, so knowing how data gets evicted helps you design caches that keep the most important data as long as possible.

2.1 Why Eviction Is Necessary

Imagine your cache can only hold 1,000 entries. When you add the 1,001st entry, the cache must evict (discard) one existing entry to maintain its size constraints. Without eviction, memory usage would continue climbing until your app crashes or slows drastically.

Smart eviction policies help maintain cache effectiveness by balancing between expiring stale data and retaining frequently or recently used items.

2.2 Key Eviction Policies

Here are some of the most common eviction strategies you’ll encounter:

FIFO — First-In, First-Out

- FIFO evicts the cache entry that was added earliest—the one that’s been in the cache the longest.

- Think of it like a queue: first in, first out.

- It’s simple, predictable, and easy to implement.

- However, FIFO ignores how often or how recently an entry is used: a frequently accessed item can still be evicted simply because it was inserted early.

- Good for workloads where access patterns are uniform or time order matters.
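To make the queue behaviour concrete, here is a minimal FIFO sketch built on the insertion order of a JavaScript `Map`. `FifoCache` is a hypothetical name used only for illustration; this is not the source of runtime-memory-cache, and TTL handling is left out.

```typescript
// Minimal FIFO cache sketch; FifoCache is a hypothetical name, not the package's class.
class FifoCache<K, V> {
  private readonly store = new Map<K, V>();
  private readonly maxSize: number;

  constructor(maxSize: number) {
    this.maxSize = maxSize;
  }

  set(key: K, value: V): void {
    // Updating an existing key keeps its original insertion position.
    if (!this.store.has(key) && this.store.size >= this.maxSize) {
      // Map iterates in insertion order, so the first key is the oldest.
      const oldest = this.store.keys().next();
      if (!oldest.done) this.store.delete(oldest.value);
    }
    this.store.set(key, value);
  }

  get(key: K): V | undefined {
    // Reads never change the eviction order under FIFO.
    return this.store.get(key);
  }

  has(key: K): boolean {
    return this.store.has(key);
  }
}

const fifo = new FifoCache<string, number>(2);
fifo.set("a", 1);
fifo.set("b", 2);
fifo.get("a");    // a read does not protect "a"
fifo.set("c", 3); // evicts "a", the earliest insertion
```

Note that reading "a" just before the third insert does not save it, which is exactly the weakness described in the list above.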

LRU — Least Recently Used

- LRU evicts the entry that was accessed the longest time ago.

- The idea is that data used recently is more likely to be used again soon, so it should stay in the cache longer.

- LRU typically leads to better hit rates in real-world applications because it adapts to temporal locality of references.

- Implementing LRU requires tracking when entries were last used, which can add complexity and overhead.

- Suitable for session stores, caches for queries, API responses, or UI state.

LFU — Least Frequently Used

- LFU evicts the element with the fewest accesses over time.

- This policy favors keeping the most “popular” or “hot” entries, which might be accessed many times over longer periods.

- LFU is more sophisticated than LRU and can perform better when access frequency is a stronger predictor of usefulness than recency.

- Implementation is more complex, involving counters and often requires approximate or amortized methods for performance.

- Favored in recommendation engines, analytics, or places with highly skewed hot keys.
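A small sketch can show what "counters" means in practice. The version below keeps one hit counter per entry and does a linear scan to find the victim, which is simple but O(n) per eviction; production LFU designs typically use frequency buckets or approximate counters instead. `LfuCache` is a hypothetical name, and runtime-memory-cache does not ship this policy today.

```typescript
// Minimal LFU sketch with an O(n) eviction scan; LfuCache is a hypothetical name.
class LfuCache<K, V> {
  private readonly store = new Map<K, { value: V; hits: number }>();
  private readonly maxSize: number;

  constructor(maxSize: number) {
    this.maxSize = maxSize;
  }

  get(key: K): V | undefined {
    const entry = this.store.get(key);
    if (entry === undefined) return undefined;
    entry.hits += 1; // every successful read bumps the frequency counter
    return entry.value;
  }

  set(key: K, value: V): void {
    const existing = this.store.get(key);
    if (existing !== undefined) {
      existing.value = value; // keep the accumulated counter on update
      return;
    }
    if (this.store.size >= this.maxSize) this.evictLeastFrequent();
    this.store.set(key, { value, hits: 0 });
  }

  has(key: K): boolean {
    return this.store.has(key);
  }

  private evictLeastFrequent(): void {
    let victim: K | undefined;
    let fewest = Infinity;
    // Linear scan; on ties the earliest-inserted entry is chosen.
    for (const [key, entry] of this.store) {
      if (entry.hits < fewest) {
        fewest = entry.hits;
        victim = key;
      }
    }
    if (victim !== undefined) this.store.delete(victim);
  }
}

const lfu = new LfuCache<string, string>(2);
lfu.set("hot", "x");
lfu.set("cold", "y");
lfu.get("hot");
lfu.get("hot");
lfu.set("new", "z"); // evicts "cold" (0 reads) and keeps "hot" (2 reads)
```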

Other Eviction Policies

- Random Eviction: Remove a random cache entry when space is needed. Very simple, but generally less effective.

- TTL-Based Eviction: Entries are evicted based on a time-to-live expiration rather than usage patterns.

- ARC (Adaptive Replacement Cache): A hybrid strategy combining recency and frequency for more finely tuned eviction.

2.3 Choosing the Right Eviction Policy

Picking an eviction policy depends on your application’s data and access patterns:

| Policy | Description | When to Use |
|---|---|---|
| FIFO | Evict oldest first | Workloads with uniform or time-based data aging |
| LRU | Evict least recently used | Widely accessed data with temporal locality (e.g., sessions, UI caches) |
| LFU | Evict least frequently used | When “popularity” drives access patterns, like analytics or trending data |
| Random | Evict random entry | Simple, low overhead; rarely ideal |
| TTL | Evict after time expiration | When freshness is more important than usage |

Understanding your access patterns will guide you to the eviction strategy that yields the best cache hit rate and resource usage.

2.4 Eviction Policy Tradeoffs

| Policy | Advantages | Considerations |
|---|---|---|
| FIFO | Simple and fast to implement | May evict frequently accessed data prematurely |
| LRU | Adapts to changing access patterns | Slightly more complex; must track usage order |
| LFU | Keeps popular data longer | More overhead; counters required |
| Random | Minimal overhead | Potentially worse hit rates |
| TTL | Ensures fresh data | May cause premature eviction of still-useful data |

2.5 The Future of Eviction Strategies

While FIFO and LRU are the most common and widely understood, the field of cache eviction policies continues evolving. Hybrid and adaptive strategies aim to combine the strengths of several policies, adjusting dynamically to workload shifts.

If you’re building or choosing a cache for your Node.js application, knowing these policies helps you align cache behavior with your performance goals and user expectations.

3. Understanding TTL (Time-To-Live) and Expiry in Caching

When implementing caching, it’s not enough to just store data; you also need to decide how long to keep each cached entry. That’s where TTL — or Time-To-Live — comes into play.

TTL is a simple but powerful concept that tells your cache how long a value should remain valid before it expires and is removed. In this section, I’ll explain why TTL matters, how it works, and some practical considerations to help you tune cache freshness and effectiveness.

3.1 What is TTL?

Time-To-Live (TTL) is the duration, typically specified in milliseconds or seconds, for which a cache entry is considered fresh and valid. Once the TTL expires, the cache entry becomes stale and should be removed or refreshed.

TTL limits how long your cache holds onto data, preventing stale or obsolete information from lingering indefinitely.

3.2 Why TTL is Important

- Ensures data freshness: Without TTL, once data is cached, it could remain forever—even if the original source changes.

- Prevents memory bloat: By expiring entries regularly, TTL keeps cache size manageable and prevents accumulation of outdated data.

- Facilitates cache consistency: Even if you don’t track every source update, TTL guarantees eventual expiration.

- Improves user experience: Serving data that’s too old can confuse users or cause bugs; TTL balances speed with accuracy.

3.3 How TTL Works in Practice

Most caches, including runtime in-memory caches, implement TTL in one of two fundamental ways:

Lazy Expiry

- Cache entries store their expiry timestamp (time added + TTL).

- When the entry is accessed (via `get` or `has`), the cache checks if the current time exceeds expiry.

- If expired, the entry is removed at that moment.

- Otherwise, the cached value is returned.

Pros:

- Minimal overhead; expiry only checked on access.

- No need for continuous cleanup tasks.

Cons:

- Expired entries that are never queried remain in the cache, occupying memory until cleanup.

Proactive/Eager Cleanup

- A background task (e.g., periodic timer, cron job) scans the cache and removes expired entries.

- This prevents buildup of stale entries left unaccessed for a long time.

- Particularly useful in caches where entries are rarely queried after insertion.

Hybrid Approach:

Many caches (including mine) combine both:

- Lazy expiry during normal access operations.

- Manual or scheduled `cleanup()` functions to purge expired entries proactively.

3.4 Choosing a TTL Value

TTL isn’t one-size-fits-all; it depends heavily on:

- Data volatility: How often does the underlying data actually change?

- Tolerance for staleness: Can your app serve slightly outdated info gracefully?

- Performance needs: Longer TTL means fewer backend hits, but increased risk of stale data.

- Memory constraints: Short TTL requires more frequent backend fetches but keeps cache lean.

Example use cases:

| Use Case | Suggested TTL |
|---|---|
| Session tokens | Minutes to hours |
| User permissions | Seconds to minutes |
| API responses (static) | Hours to days |
| Real-time stock prices | Seconds or less |

3.5 TTL Granularity: Global vs Per-Entry

Many caches choose a global TTL for simplicity — all entries expire after the same duration.

But a more flexible design allows per-entry TTL during insertion:

- Customize expiry depending on the type or freshness of data.

- Example: Cache user session with longer TTL but cache API results with shorter TTL.

Per-entry TTL adds complexity but improves cache efficiency by tailoring data freshness.
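One way to combine the two approaches is a cache-wide default with an optional override at insertion time. The sketch below assumes hypothetical names (`TtlStore`, `defaultTtlMs`) and takes the current time as a parameter so the behaviour can be checked without waiting; it is an illustration of the idea, not the package's implementation.

```typescript
// Sketch: a cache-wide default TTL with an optional per-entry override.
// TtlStore and its members are hypothetical names for illustration.
class TtlStore<V> {
  private readonly entries = new Map<string, { value: V; expiresAt: number }>();
  private readonly defaultTtlMs: number;

  constructor(defaultTtlMs: number) {
    this.defaultTtlMs = defaultTtlMs;
  }

  // `now` is injectable so expiry can be exercised deterministically.
  set(key: string, value: V, ttlMs?: number, now: number = Date.now()): void {
    const ttl = ttlMs ?? this.defaultTtlMs; // the per-entry TTL wins when given
    this.entries.set(key, { value, expiresAt: now + ttl });
  }

  get(key: string, now: number = Date.now()): V | undefined {
    const entry = this.entries.get(key);
    if (entry === undefined) return undefined;
    if (now >= entry.expiresAt) {
      this.entries.delete(key); // lazy expiry on read
      return undefined;
    }
    return entry.value;
  }
}

const ttlStore = new TtlStore<string>(1000);            // 1 second default
ttlStore.set("api:result", "payload", undefined, 0);    // uses the default TTL
ttlStore.set("session:abc", "token", 60000, 0);         // 60 second override
```

At a simulated time of 1,000 ms the API result has expired while the session entry is still live, which is the behaviour the bullets above describe.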

3.6 Handling TTL in Your Cache

Here is a conceptual example in TypeScript demonstrating how TTL can be tracked with each entry:

interface CacheEntry<V> {
  value: V;
  expiryTimestamp: number; // Unix timestamp in ms when entry expires
}

const cache = new Map<string, CacheEntry<any>>();

// Store a value together with its absolute expiry time
function set(key: string, value: any, ttlMillis: number) {
  const expiryTimestamp = Date.now() + ttlMillis;
  cache.set(key, { value, expiryTimestamp });
}

// Read a value, lazily removing it if its TTL has elapsed
function get(key: string) {
  const entry = cache.get(key);
  if (!entry) return undefined;
  // Treat the entry as expired once the current time reaches its expiry timestamp
  if (Date.now() >= entry.expiryTimestamp) {
    cache.delete(key);
    return undefined;
  }
  return entry.value;
}

3.7 Manual Cleanup for Expired Entries

Because lazy expiry only removes entries when accessed, periodically cleaning up expired keys can prevent memory waste:

function cleanup() {
  const now = Date.now();
  for (const [key, entry] of cache.entries()) {
    if (entry.expiryTimestamp <= now) {
      cache.delete(key);
    }
  }
}

Scheduling this cleanup with setInterval or running it at convenient application events keeps memory footprint controlled.

3.8 Impact of TTL on Performance & Consistency

- Too short: You might get excessive cache misses and miss out on caching benefits.

- Too long: Risk of serving stale data or memory overuse.

- No TTL: Data can become dangerously stale and cache size can grow indefinitely.

Finding the right TTL balances performance and accuracy.

That finishes our deep look at TTL and expiry. Next up, we'll explore how to use the runtime-memory-cache package in practice, through installation, API walkthroughs, and common usage patterns.

4. Getting Started with runtime-memory-cache: Installation and Practical Usage

Now that we’ve covered the key caching concepts like eviction policies and TTLs, it’s time to get hands-on with the package itself. I built runtime-memory-cache to be as simple and intuitive as possible, while still providing flexibility and control.

In this section, I’ll walk you through how to install the package, create a cache instance, and use its core methods to cache data efficiently.

4.1 Installation

Getting started is as easy as running:

npm install runtime-memory-cache

The package is published on npm, fully typed with TypeScript support, and designed for zero dependencies, so it won’t bloat your project or add security concerns.

4.2 Creating a Cache Instance

To create a cache, you import the class and instantiate it with optional configuration options. Here’s a basic example with typical settings:

import RuntimeMemoryCache from 'runtime-memory-cache';

const cache = new RuntimeMemoryCache({
  ttl: 60000,            // Entries expire 1 minute after being set by default
  maxSize: 1000,         // Cache holds up to 1,000 entries
  evictionPolicy: 'LRU', // Can be 'FIFO' or 'LRU' depending on your preference
  enableStats: true      // Collect cache hit, miss, and eviction statistics
});

The options above are all optional and have sensible defaults (ttl and maxSize are undefined by default, meaning no expiry or size limit):

| Option | Type | Description |
|---|---|---|
| ttl | number | Default Time-to-Live (TTL) in milliseconds for cached items. |
| maxSize | number | Maximum number of entries before eviction occurs. |
| evictionPolicy | 'FIFO' \| 'LRU' | Defines eviction strategy to use when full. |
| enableStats | boolean | Set to true to track cache hits, misses, and evictions. |

4.3 Core Methods Explained with Examples

Once you have a cache instance, the API revolves around the familiar key-value store operations — set, get, has, del, and a few utilities for stats and cleanup.

set(key, value, ttl?)

Insert or update a cache entry. Optionally override the configured global TTL for this particular item.

cache.set('user:42', { name: 'Arya Stark', location: 'Winterfell' }); // Uses default TTL

cache.set('session:abc', { token: 'XYZ' }, 120000); // Custom TTL of 2 minutes

If the cache size exceeds maxSize, the cache will evict an entry automatically using your chosen eviction policy.

get(key)

Retrieve a value by its key. Returns undefined if the key is not present or the entry has expired.

const user = cache.get('user:42');

if (user) {
  console.log('User found:', user);
} else {
  console.log('Cache miss');
}

This method also counts as an access in LRU eviction, updating the recency of the entry.

has(key)

Checks if the key exists and hasn’t expired. Useful for conditionally acting on cache presence.

if (cache.has('session:abc')) {
  console.log('Session active');
} else {
  console.log('No active session found');
}

del(key)

Removes a specific entry from the cache immediately.

cache.del('user:42'); // Manually invalidate cache

cleanup()

Manually remove all expired entries from the cache. This is helpful if your application doesn’t access these entries frequently, ensuring memory doesn’t hold onto stale data.

cache.cleanup();

You might schedule this periodically for long-running services.

4.4 Viewing Cache Contents

You can inspect the cache's contents and size with:

- `cache.keys()` – returns an iterable of all current keys.

- `cache.size()` – returns the number of valid entries currently stored.

Example:

console.log([...cache.keys()]); // Prints all cache keys

console.log('Cache size:', cache.size()); // Shows current number of entries

4.5 Cache Statistics

With enableStats set to true, you can measure how well the cache is performing by calling:

const stats = cache.getStats();

console.log(`Hits: ${stats.hits}, Misses: ${stats.misses}, Evictions: ${stats.evictions}`);

Useful statistics include:

| Stat Type | Meaning |
|---|---|
| Hits | Number of successful cache retrievals |
| Misses | Number of lookups that found no entry |
| Evictions | Number of entries removed due to capacity |

You can also reset stats if needed:

cache.resetStats();
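The raw counters are most useful once turned into a hit rate. The helper below is a small sketch; the `CacheStats` shape is assumed from the three fields logged above, and the package's real stats type may carry more fields.

```typescript
// Shape assumed from the fields logged above; the real type may differ.
interface CacheStats {
  hits: number;
  misses: number;
  evictions: number;
}

// Fraction of lookups served from the cache, in the range 0..1.
function hitRate(stats: CacheStats): number {
  const lookups = stats.hits + stats.misses;
  // Avoid dividing by zero before any lookup has happened.
  return lookups === 0 ? 0 : stats.hits / lookups;
}

const sampleStats: CacheStats = { hits: 900, misses: 100, evictions: 12 };
const sampleRate = hitRate(sampleStats); // 900 hits out of 1,000 lookups
```

In an application you would pass the object returned by `cache.getStats()` instead of the sample literal.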

4.6 Estimating Memory Usage

To monitor the cache's footprint, you can call:

const memUsage = cache.getMemoryUsage();

console.log(`Approximate memory usage: ${memUsage.estimatedBytes} bytes`);

This estimate is based on stringifying cached entries and summing their sizes, providing an “order of magnitude” rather than exact memory usage.
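To illustrate what a stringify-based estimate looks like, here is a rough sketch. It is an assumption about the general technique, not the actual `getMemoryUsage()` code: it counts two bytes per UTF-16 code unit for the key and the JSON form of the value, and ignores object overhead entirely.

```typescript
// Rough size estimate by serializing entries; a sketch of the technique,
// not the package's actual getMemoryUsage() implementation.
function estimateBytes(entries: Map<string, unknown>): number {
  let total = 0;
  for (const [key, value] of entries) {
    // JSON.stringify yields undefined for functions and undefined values; count those as empty.
    const serialized = (JSON.stringify(value) as string | undefined) ?? "";
    // JavaScript strings are UTF-16, so count 2 bytes per code unit.
    total += (key.length + serialized.length) * 2;
  }
  return total;
}

const estimateDemo = new Map<string, unknown>([
  ["user:42", { name: "Arya" }],
]);
const estimatedBytes = estimateBytes(estimateDemo);
```

Values that `JSON.stringify` cannot handle, such as circular structures or BigInt, would throw here, which is one reason an estimate like this should be treated as an order of magnitude only.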

4.7 Full Example: Simple API Response Caching

Combining these methods, here’s a snippet showing how you can cache API results for better performance:

async function fetchUserData(userId: string) {
  const cacheKey = `user:${userId}`;

  const cached = cache.get(cacheKey);
  // Compare against undefined so falsy values (0, '', false) still count as hits
  if (cached !== undefined) return cached;

  // Else fetch from external API or DB (fetchFromDatabase is a placeholder for your own function)
  const userData = await fetchFromDatabase(userId);

  // Store result in cache, rely on default TTL
  cache.set(cacheKey, userData);
  return userData;
}

5. How Eviction and TTL Work Under the Hood in runtime-memory-cache

Understanding what happens behind the scenes when you call get, set, or when the cache removes entries automatically is essential for mastering caching strategies and tuning your cache for your application.

In this section, I’ll take you through the mechanics of eviction and TTL management in an in-memory cache, especially as it applies to runtime-memory-cache’s approach.

5.1 Overview: The Challenge of Space & Freshness

Your cache has two main responsibilities:

- Prevent memory overflow: Keep the cache size under the configured `maxSize` by evicting entries thoughtfully.

- Ensure data freshness: Remove expired entries either proactively or lazily, based on TTL per entry.

Balancing these goals while maintaining fast, O(1) operations is what a great cache achieves.

5.2 Eviction Mechanism: Handling the Maximum Size

When you insert a new cache entry via set():

- Check capacity: If the current cache size has reached or exceeded `maxSize`, the cache must evict one or more entries before inserting.

- Evict an entry based on the eviction policy:

  - For FIFO, remove the oldest inserted key.

  - For LRU, remove the least recently used key.

- Insert the new entry: Place the new key at the “most recent” position, either at the end of the `Map` or its logical equivalent.

This eviction is done synchronously as part of the set, ensuring the cache size always respects your maxSize.

5.3 TTL Enforcement: Keeping Cache Data Fresh

Each cache entry is tagged with an expiry timestamp, calculated when the entry is created or updated:

expiry = current_time + TTL_for_this_entry

- The TTL can be a global default or overridden per entry via the optional third argument to `set`.

Expiry checks happen lazily:

- When you call `get(key)` or `has(key)`:

  - The cache checks if the current time is past the expiry timestamp.

  - If expired, the entry is immediately removed and the request responds as a cache miss (`undefined`).

Expired entries may also be cleaned up proactively:

- You can call `cleanup()` to scan and remove expired entries regardless of access.

- This is useful for reducing memory footprint especially when many entries have expired but remain in the cache because they’re not being accessed.

5.4 Efficiency: Why Lazy Expiry is a Good Tradeoff

Lazy expiry avoids the overhead of constant background scanning or timers, which can be complex and resource-heavy.

Instead, expiry is checked on-demand — only when clients ask for entries.

Most workloads naturally touch popular entries frequently, so stale entries tend to be detected and removed rather quickly.

Periodic manual cleanup covers the corner cases of long-forgotten expired entries.

5.5 How Eviction and TTL Interact

Consider insertions and expirations together:

- When you insert an entry, if the cache is full, eviction precedes insertion.

- Expired entries behave like they don’t exist during `get` and `has`, but they still occupy space until removed.

- Therefore, eviction is triggered based on the size counting actual entries present, some of which may have expired but not yet cleaned up.

This means cache might evict live entries even if expired entries exist but haven’t been cleaned. Calling cleanup() regularly helps reclaim space from expired data and reduces unnecessary live evictions.
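One way to reduce unnecessary live evictions is to purge expired entries before choosing a victim. The sketch below illustrates that alternative with hypothetical names (`setWithPurge`, `Entry`); it is not a description of how runtime-memory-cache behaves internally, and the purge is an O(n) scan that only runs when the cache is full.

```typescript
// Sketch of a set() that purges expired entries before evicting a live one.
// Illustrative alternative only; names are hypothetical.
interface Entry<V> {
  value: V;
  expiresAt: number;
}

function setWithPurge<V>(
  store: Map<string, Entry<V>>,
  maxSize: number,
  key: string,
  value: V,
  ttlMs: number,
  now: number = Date.now(),
): void {
  if (!store.has(key) && store.size >= maxSize) {
    // First reclaim space held by entries that have already expired.
    for (const [k, entry] of store) {
      if (entry.expiresAt <= now) store.delete(k);
    }
    // Only if the cache is still full, evict the oldest (first) entry.
    if (store.size >= maxSize) {
      const oldest = store.keys().next();
      if (!oldest.done) store.delete(oldest.value);
    }
  }
  store.set(key, { value, expiresAt: now + ttlMs });
}

const purgeDemo = new Map<string, Entry<number>>();
setWithPurge(purgeDemo, 2, "live", 1, 10000, 0);    // expires at t=10000
setWithPurge(purgeDemo, 2, "stale", 2, 100, 0);     // expires at t=100
setWithPurge(purgeDemo, 2, "fresh", 3, 10000, 500); // at t=500 "stale" is purged, "live" survives
```

Without the purge step, a plain FIFO eviction would have removed "live", the oldest entry, even though "stale" was already dead weight.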

5.6 Recency Tracking for LRU: How Order is Maintained

For eviction policies like LRU:

- The planned implementation will move accessed/updated entries to the most recent position in Map’s iteration order.

- This will make it straightforward to find the least recently used (the first key in the `Map`) for eviction when needed.

- The goal is to achieve O(1) amortized complexity for lookups and reordering without needing a custom linked list.
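The Map-reordering idea described above can be sketched in a few lines. This shows the general delete-then-set technique under a hypothetical class name (`LruCache`); it is not copied from the package source, and TTL handling is omitted.

```typescript
// LRU via Map re-insertion; LruCache is a hypothetical name for illustration.
class LruCache<K, V> {
  private readonly store = new Map<K, V>();
  private readonly maxSize: number;

  constructor(maxSize: number) {
    this.maxSize = maxSize;
  }

  get(key: K): V | undefined {
    if (!this.store.has(key)) return undefined;
    const value = this.store.get(key) as V;
    // Delete + set moves the key to the end of the iteration order (most recent).
    this.store.delete(key);
    this.store.set(key, value);
    return value;
  }

  set(key: K, value: V): void {
    if (this.store.has(key)) {
      this.store.delete(key); // an update also counts as a use
    } else if (this.store.size >= this.maxSize) {
      // The first key in iteration order is the least recently used.
      const leastRecent = this.store.keys().next();
      if (!leastRecent.done) this.store.delete(leastRecent.value);
    }
    this.store.set(key, value);
  }

  has(key: K): boolean {
    return this.store.has(key);
  }
}

const lruDemo = new LruCache<string, number>(2);
lruDemo.set("a", 1);
lruDemo.set("b", 2);
lruDemo.get("a");    // "a" becomes the most recently used key
lruDemo.set("c", 3); // evicts "b", now the least recently used
```

In this sketch `has` deliberately does not refresh recency; whether a presence check should count as a use is a design choice.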

5.7 Practical Advice

- Keep TTLs balanced: Not too short or too long — this affects how often expired data builds up, causing evictions.

- Call `cleanup()` in long-running processes: Helps clear expired entries and reduce cache size.

- Choose eviction policy aligned with your workload: For example, LRU for usage-based caching, FIFO for simple eviction needs.

- Enable stats: Monitor hits, misses, and evictions to tune cache configuration.

5.8 Understanding the Combined Power of TTL and Eviction

The pairing of TTL and eviction creates a largely self-managing cache: it maintains freshness and memory limits with little manual intervention beyond an occasional cleanup() call.

By leveraging JavaScript’s native Map iteration order and timestamp comparison, these mechanisms stay elegant, fast, and predictable.

This design philosophy ensures that runtime-memory-cache remains lightweight while providing powerful caching capabilities.

6. Practical Usage Patterns with runtime-memory-cache: Real-World Examples and Tips

With a good understanding of the cache mechanics, it’s time to explore how to apply runtime-memory-cache in typical backend scenarios to boost speed, reduce load, and streamline data access.

I’ll walk you through some common use cases and best practices that emphasize how straightforward and effective this cache can be.

6.1 Basic Caching of API or Database Calls

One of the most frequent reasons to introduce a cache is to avoid expensive repetitions of API requests or database queries.

Example: Cache user profile data to prevent repeated fetches.

// Assume this is your cache instance:
const cache = new RuntimeMemoryCache({ ttl: 60000, maxSize: 5000, evictionPolicy: 'LRU', enableStats: true });

async function getUserProfile(userId: string) {
  const cacheKey = `user:${userId}`;

  // Try cache first
  let profile = cache.get(cacheKey);
  if (profile) {
    return profile; // Cache hit!
  }

  // If miss, fetch from backend API or DB
  profile = await fetchUserFromDB(userId);

  // Cache the result with default TTL
  cache.set(cacheKey, profile);
  return profile;
}

Why use this pattern?

- Dramatically reduces DB/API calls for popular users.

- Speeds up response time for subsequent requests.

- Adheres to your TTL and eviction policies automatically.

6.2 Sessions and Authentication Tokens

For apps that manage sessions or tokens, caching session data in-memory is a great strategy for ultra-fast lookups.

const sessionCache = new RuntimeMemoryCache({ ttl: 30 * 60 * 1000, maxSize: 10000 }); // 30-min TTL

function storeSession(sessionId: string, sessionData: any) {
  sessionCache.set(sessionId, sessionData); // Stores with default TTL
}

function getSession(sessionId: string) {
  return sessionCache.get(sessionId);
}

function invalidateSession(sessionId: string) {
  sessionCache.del(sessionId);
}

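Here, expired sessions are never returned by get, which avoids stale state. Because expiry is lazy, though, they stay in memory until they are accessed again or cleanup() runs, so pair this with the periodic cleanup shown in Section 6.4 to keep memory under control.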

6.3 Rate Limiting Per User or Client

Another common use case is rate limiting, where you track the number of requests a client makes in a given timeframe.

const limiterCache = new RuntimeMemoryCache({ ttl: 60 * 1000, maxSize: 10000, evictionPolicy: 'LRU', enableStats: true });

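const WINDOW_MS = 60 * 1000;

function rateLimit(userId: string) {
  const key = `rate-limit:${userId}`;
  const now = Date.now();

  let entry = limiterCache.get(key);
  if (!entry) {
    // First request in a new window
    entry = { count: 0, windowStart: now };
  }
  entry.count += 1;

  if (entry.count > 100) {
    throw new Error('Rate limit exceeded');
  }

  // set() recomputes the expiry on every update, so pass only the time left in this window
  const remainingMs = Math.max(1, entry.windowStart + WINDOW_MS - now);
  limiterCache.set(key, entry, remainingMs);
}

What's happening?

- Each entry tracks the request count and the start of its 1-minute window.

- Because set recomputes the expiry on every update, the code passes only the time remaining in the window as the TTL. With the default TTL, a steadily active client would keep extending its own window and its counter would never reset.

- Rate limits reset automatically when the window's entry expires.

- Eviction keeps the cache bounded, but an evicted entry also resets that client's counter, so keep maxSize above the number of clients you expect within one window.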

6.4 Manual Cleanup Usage

For long-running services that intermittently cache a lot of data, I recommend scheduling periodic cleanup calls to remove expired entries and free memory.

setInterval(() => {
  cache.cleanup();
  console.log('Cache cleanup performed');
}, 5 * 60 * 1000); // Every 5 minutes

6.5 Monitoring Cache Effectiveness with Stats

To optimize and debug your cache usage, monitor its statistics:

setInterval(() => {
  const stats = cache.getStats();
  console.log(`Cache stats: Hits=${stats.hits}, Misses=${stats.misses}, Evictions=${stats.evictions}`);
}, 30000); // Every 30 seconds

Use these logs to fine-tune TTL, max size, or eviction strategy if you observe poor hit rates or excessive evictions.

6.6 Integrating with Express Middleware

Here’s a simple example of caching API responses inside an Express route handler:

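import express from 'express';
import RuntimeMemoryCache from 'runtime-memory-cache';

const app = express();
const cache = new RuntimeMemoryCache({ ttl: 60000, maxSize: 2000, evictionPolicy: 'LRU' });

app.get('/product/:id', async (req, res, next) => {
  try {
    // Namespace the key so product IDs cannot collide with other cached data
    const cacheKey = `product:${req.params.id}`;
    let product = cache.get(cacheKey);
    if (product === undefined) {
      // fetchProductFromDB stands in for your own data-access function
      product = await fetchProductFromDB(req.params.id);
      cache.set(cacheKey, product);
    }
    res.json(product);
  } catch (err) {
    // Express 4 does not catch rejected promises from async handlers
    next(err);
  }
});

app.listen(3000, () => console.log('App started.'));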

This pattern caches product data, making subsequent calls extremely fast.

6.7 Advanced: Memoizing Expensive Functions

You can use runtime-memory-cache as a memoization store to cache results of CPU-intensive functions:

function memoize<T, R>(fn: (arg: T) => R, cache: RuntimeMemoryCache<T, R>): (arg: T) => R {
  return (arg: T) => {
    const cached = cache.get(arg);
    if (cached !== undefined) {
      return cached;
    }
    const result = fn(arg);
    cache.set(arg, result);
    return result;
  };
}

// Example usage:

const expensiveComputationCached = memoize(expensiveComputation, new RuntimeMemoryCache({ ttl: 60000 }));
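One caveat with memoizing on the raw argument: if the cache compares keys by identity, as a JavaScript `Map` does, two structurally equal objects are treated as different keys. A common workaround is to derive a string key from the arguments. The sketch below uses hypothetical names (`memoizeBy`, `keyOf`) and a plain `Map` as a stand-in store so it runs on its own.

```typescript
// memoizeBy and keyOf are hypothetical names. A plain Map stands in for the
// cache so the sketch is self-contained.
function memoizeBy<A extends unknown[], R>(
  fn: (...args: A) => R,
  keyOf: (...args: A) => string,
  store: Map<string, R> = new Map<string, R>(),
): (...args: A) => R {
  return (...args: A): R => {
    const key = keyOf(...args);
    // has() distinguishes a cached undefined result from "not cached yet".
    if (store.has(key)) return store.get(key) as R;
    const result = fn(...args);
    store.set(key, result);
    return result;
  };
}

let areaCalls = 0;
const area = (size: { w: number; h: number }): number => {
  areaCalls += 1;
  return size.w * size.h;
};

const cachedArea = memoizeBy(area, (size) => `${size.w}x${size.h}`);
const firstArea = cachedArea({ w: 2, h: 3 });  // computed
const secondArea = cachedArea({ w: 2, h: 3 }); // same key from a different object, served from the store
```

The key function must capture everything that affects the result; a key that is too coarse silently returns wrong answers.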

6.8 Tips for Effective Caching

- Use meaningful, unique cache keys to avoid collisions.

- Choose eviction policies based on your access patterns (see Section 2).

- Tune TTL values carefully to balance freshness and cache hit rate.

- Keep an eye on stats to catch unexpected cache behavior.

- Remember to call cleanup() to purge expired entries when necessary.

7. Performance Benchmarks and Profiling: Seeing runtime-memory-cache in Action

Understanding theoretical design is crucial, but developers really want to see how fast and efficient a cache performs under real-world and stress conditions. In this section, I’ll share insights on measuring performance, some benchmarks with runtime-memory-cache, and tips to profile and tune your cache.

7.1 Why Benchmark Your Cache?

- Validate speed: Confirm that get/set operations are truly O(1) and fast at scale.

- Measure latency: See how quick your cache responds even at heavy loads.

- Memory footprint: Monitor how much RAM your cache consumes with growing data.

- Eviction behavior: Understand how eviction impacts throughput and hit rate.

7.2 Simple Benchmarking Script

Here’s a straightforward benchmark in Node.js to test set and get throughput:

import RuntimeMemoryCache from 'runtime-memory-cache';

const N = 100000;
const cache = new RuntimeMemoryCache({ ttl: 60000, maxSize: 50000, evictionPolicy: 'LRU' });

console.time('set');
for (let i = 0; i < N; i++) {
  cache.set(`key${i}`, { value: i, timestamp: Date.now() });
}
console.timeEnd('set');

console.time('get');
for (let i = 0; i < N; i++) {
  cache.get(`key${i}`);
}
console.timeEnd('get');

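Note that with N = 100,000 and maxSize = 50,000, the first 50,000 keys have already been evicted by the time the get loop runs, so roughly half of the lookups are misses. Set N equal to maxSize if you want to measure hits only.

Typical results: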

- Set operations can exceed hundreds of thousands per second.

- Get operations often match or exceed set performance.

- Low latency means minimal bottleneck within request pipelines.
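If you want a number rather than a console timer, a small helper can convert elapsed time into operations per second. The sketch below uses a hypothetical `opsPerSecond` function and a plain `Map` as a stand-in for the cache; it pre-builds the keys so that string construction is not part of the measurement, and runs one warm-up pass first.

```typescript
// opsPerSecond is a hypothetical helper; a plain Map stands in for the cache.
function opsPerSecond<T>(inputs: readonly T[], op: (input: T) => void): number {
  const start = Date.now();
  for (const input of inputs) op(input);
  // Date.now() has millisecond resolution, so clamp to avoid dividing by zero.
  const elapsedMs = Math.max(Date.now() - start, 1);
  return (inputs.length / elapsedMs) * 1000;
}

const benchTarget = new Map<string, number>();
// Build keys up front so template-string work is excluded from the timing.
const benchKeys = Array.from({ length: 50000 }, (_, i) => `key${i}`);

// Warm-up pass so the hot path is compiled before measuring.
opsPerSecond(benchKeys, (key) => { benchTarget.set(key, 0); });

const setRate = opsPerSecond(benchKeys, (key) => { benchTarget.set(key, 1); });
const getRate = opsPerSecond(benchKeys, (key) => { benchTarget.get(key); });
```

Results vary widely between machines and Node.js versions, so compare runs on the same hardware rather than against published figures.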

7.3 Large Data Handling and Memory Use

- As you increase the size or complexity of cached values, memory usage grows.

- Use `getMemoryUsage()` to see estimated bytes and detect growth trends.

- Always tune `maxSize` to reflect your application’s memory constraints.

Example:

const memUsage = cache.getMemoryUsage();

console.log(`Estimated memory usage: ${memUsage.estimatedBytes / 1024} KB`);

7.4 Profiling Tools

- Use Node.js's built-in `--prof` profiler, or attach the inspector with `--inspect` and take heap snapshots, to observe CPU and memory behavior.

- Use external tools like Chrome DevTools or `clinic` for CPU/memory profiling.

- Track stats output over time to identify cache inefficiencies.

8. Advanced Features, Extensibility, and Future Plans: Growing with Your Needs

While the core of runtime-memory-cache focuses on simplicity and reliability, a powerful cache should be adaptable as your backend evolves. In this section, I want to show you some of the more advanced options in the package, share how you can extend it for upcoming needs, and give a sneak peek into features on my roadmap—including support for more eviction policies and integration hooks.

8.1 Custom Per-Entry TTL (Time-To-Live)

A particularly useful feature is the ability to set a custom TTL for each cache entry:

cache.set('user:admin', someObj, 10 * 60 * 1000); // 10-minute expiry

cache.set('promo:summer', promoObj, 24 * 60 * 60 * 1000); // 24-hour expiry

Why is this so useful?

- You can strategically cache fast-changing data (short TTL) alongside slow-changing or near-static data (long TTL) in the same cache, greatly improving efficiency.

- No need for multiple cache instances just for different expiry rules.
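To make the idea concrete, here is a minimal sketch of how per-entry TTL with lazy expiry can work in principle. It is not the package's actual implementation: TinyTtlCache, its fields, and the injectable clock are hypothetical names used only for illustration.

```typescript
// Illustrative only: a tiny Map-based cache with per-entry TTL.
// TinyTtlCache is a hypothetical name, not part of runtime-memory-cache.
type Entry<V> = { value: V; expiresAt: number };

class TinyTtlCache<V> {
  private store = new Map<string, Entry<V>>();
  private defaultTtlMs: number;
  private now: () => number;

  // The clock is injectable so expiry can be tested without real waiting.
  constructor(defaultTtlMs: number, now: () => number = Date.now) {
    this.defaultTtlMs = defaultTtlMs;
    this.now = now;
  }

  // A per-entry TTL overrides the cache-wide default when provided.
  set(key: string, value: V, ttlMs: number = this.defaultTtlMs): void {
    this.store.set(key, { value, expiresAt: this.now() + ttlMs });
  }

  get(key: string): V | undefined {
    const entry = this.store.get(key);
    if (!entry) return undefined;
    if (this.now() >= entry.expiresAt) {
      // Lazy expiry: the stale entry is removed when it is next read.
      this.store.delete(key);
      return undefined;
    }
    return entry.value;
  }
}
```

Each entry stores its own absolute expiry time, which is why short-lived and long-lived data can share one instance without interfering with each other.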

8.2 Stats for Observability & Automation

Many built-in caches in other platforms are black boxes—runtime-memory-cache is purposely transparent:

- Use enableStats: true to gather real-time metrics.

- Automate tuning: In future versions, you’ll be able to adjust cache parameters at runtime (e.g., increasing maxSize if hit rate is too low).

- Stats-driven control is foundational for adaptive, production-ready workloads.
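As a small illustration of stats-driven decisions, the sketch below derives a hit rate from hit and miss counters and flags a cache that may need tuning. The CacheStatsLike shape and the thresholds are assumptions for this example; check the stats object your version of the package returns before relying on the field names.

```typescript
// Assumed shape: only the hits and misses counters discussed in this article.
interface CacheStatsLike {
  hits: number;
  misses: number;
}

// Fraction of lookups served from the cache, in the range 0..1.
function hitRate(stats: CacheStatsLike): number {
  const total = stats.hits + stats.misses;
  return total === 0 ? 0 : stats.hits / total;
}

// Flags a cache whose hit rate is low once enough lookups have been observed.
// The default thresholds are arbitrary starting points, not recommendations.
function needsTuning(
  stats: CacheStatsLike,
  minHitRate: number = 0.8,
  minSamples: number = 100,
): boolean {
  const total = stats.hits + stats.misses;
  return total >= minSamples && hitRate(stats) < minHitRate;
}
```

A check like this can run on a timer or behind a health endpoint, feeding alerts long before users notice slow responses.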

8.3 Modular Eviction Policies and the Road to LFU (and Beyond)

At present, FIFO and LRU are available (see Section 2). But I recognize that as your app’s load and access patterns shift, you might crave even more control. That’s why I’m architecting the next iterations with modular eviction logic.

- LFU (Least Frequently Used): Coming soon! This option will allow the cache to evict rarely used entries, excellent for workloads where “popularity” matters more than recency.

- ARC, custom hybrid strategies: As the open source community grows, adding new eviction modules (or plugging in your own!) will become straightforward.

- If you’re eager to contribute or have a use-case to discuss, I’d love to connect!

8.4 Planned Advanced Features

Here’s a taste of what else I plan to add (and encourage others to help shape):

- Event hooks: Subscribe to onEvict, onSet, onHit, etc.—perfect for integrating cache hits/misses into wider analytics or alerting systems.

- Async fallback/getters: Trigger a backend fetch+cache-fill on cache miss (future).

- Batch operations: Set, get, delete multiple keys at once (bulk operations for efficiency).

- Integration adapters: Easy plugins for popular web-framework middlewares, like Express or Koa.

- Type inference improvements: Even tighter TypeScript integration for generics, inferred return types, and compile-time cache key safety.

8.5 Example: Extending runtime-memory-cache for Your App

Since the codebase is lightweight and clearly structured, it’s easy to subclass or wrap if you want to experiment:

class LoggingCache<K, V> extends RuntimeMemoryCache<K, V> {

set(key: K, value: V, ttl?: number) {

console.log('Set', key, value);

return super.set(key, value, ttl);

}

}

You can also fork and add your own eviction module, e.g. for LFU, by following the patterns in the code and the open contribution guidelines.

8.6 Why Extensibility Matters

No two applications have the same access patterns, data volatility, or performance envelope. A cache that grows with your needs—be that more eviction options, advanced hooks, or deeper introspection—protects your investment and allows experimentation. Plus, as the author, I want to empower the community to guide the roadmap.

9. Debugging, Troubleshooting, and Common Pitfalls with runtime-memory-cache

While caching brings great performance benefits, it also introduces complexity that can lead to subtle bugs or inefficiencies if not managed carefully. Based on my experience building and maintaining runtime-memory-cache, here are some practical tips and common gotchas to watch out for.

9.1 Common Issues and How to Detect Them

Cache Misses More Than Expected

- Symptoms: Your app seems to hit the backend frequently without utilizing the cache well.

- Possible Causes:

- TTL is too short, so entries expire before reuse.

- maxSize is too small, causing aggressive evictions.

- Cache keys are inconsistent or incorrect (e.g., variable parts in keys not normalized).

- Entries are accidentally deleted or not set correctly.

- How to Verify:

- Enable stats with enableStats: true and monitor hits vs misses.

- Log cache keys when set or get is called in development.

- Ensure keys are deterministic and consistent.
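Inconsistent keys are easiest to avoid when every key goes through one builder function. The helper below is a hypothetical sketch (buildCacheKey is not part of the package) that sorts the variable parts and normalizes their values, so the same logical request always maps to the same key. It lowercases values, which is an assumption that only suits case-insensitive identifiers.

```typescript
// Hypothetical helper: builds a deterministic key from a namespace and named parts.
type KeyPart = string | number | boolean | undefined;

function buildCacheKey(namespace: string, parts: Record<string, KeyPart>): string {
  const normalized = Object.keys(parts)
    .filter((name) => parts[name] !== undefined) // skip absent parts
    .sort() // property order must not change the key
    .map((name) => `${name}=${String(parts[name]).trim().toLowerCase()}`)
    .join('&');
  return `${namespace}:${normalized}`;
}
```

With this in place, buildCacheKey('user', { id: ' ABC ', page: 2 }) and buildCacheKey('user', { page: 2, id: 'abc' }) produce the same key, so they hit the same entry.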

Memory Bloat and Increased Evictions

- Symptoms: Eviction count is high, and memory usage grows unexpectedly.

- Possible Causes:

- Very large or complex objects cached.

- Cache is filled with many unique keys with little reuse.

- Expired entries are not cleaned up promptly (rare with lazy expiry but possible).

- How to Detect:

- Use getMemoryUsage() regularly to watch cache size.

- Check stats for high eviction rates.

- Remedies:

- Tune maxSize and TTL values.

- Consider manual or periodic cleanup().

- Avoid caching extremely large data chunks; cache references or summarized data instead.
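A periodic cleanup can be as simple as a timer that calls cleanup() and can be stopped on shutdown. This is a minimal sketch: scheduleCleanup and the Cleanable interface are hypothetical names, and the only thing assumed about the cache is the cleanup() method mentioned above.

```typescript
// Assumed minimal shape: any object exposing a cleanup() method.
interface Cleanable {
  cleanup(): unknown;
}

// Starts periodic sweeps and returns a function that stops them.
function scheduleCleanup(cache: Cleanable, intervalMs: number): () => void {
  const timer = setInterval(() => {
    try {
      cache.cleanup();
    } catch (error) {
      // A failed sweep should not crash the process; the next tick retries.
      console.error('cache cleanup failed', error);
    }
  }, intervalMs);

  // Call the returned function during shutdown to clear the timer.
  return () => clearInterval(timer);
}
```

In a Node.js service you might also call unref() on the timer so that it never keeps the process alive by itself.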

Stale Data Issues

- Symptoms: Users see outdated or incorrect data served from cache.

- Possible Causes:

- TTL too long for the type of volatile data.

- Cache not cleared or invalidated when underlying data changes.

- How to Mitigate:

- Use appropriately timed TTL.

- Manually del() cache keys when you know data changed.

- Employ cache versioning or key namespaces to force refresh.
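Key versioning can be sketched in a few lines. The KeyVersioner class below is a hypothetical helper, not part of the package: it embeds a per-namespace version number in every key, so bumping the version makes all older entries unreachable at once.

```typescript
// Hypothetical helper: embeds a per-namespace version in every cache key.
class KeyVersioner {
  private versions = new Map<string, number>();

  // Builds keys such as "user:v1:42".
  keyFor(namespace: string, id: string): string {
    const version = this.versions.get(namespace) ?? 1;
    return `${namespace}:v${version}:${id}`;
  }

  // After a bump, lookups use new keys and old entries are never read again.
  bump(namespace: string): void {
    const version = this.versions.get(namespace) ?? 1;
    this.versions.set(namespace, version + 1);
  }
}
```

The old entries are not deleted by the bump; they stay in memory until TTL expiry or eviction removes them, so this pattern trades some temporary memory for a cheap bulk invalidation.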

9.2 Best Practices for Reliable Cache Usage

- Meaningful Keys: Always use unique, normalized keys to avoid collisions and unexpected misses.

- Balance TTL and maxSize: Neither too aggressive nor too lax; monitor stats to adjust.

- Enable Stats Early: Give yourself visibility from the start.

- Schedule Cleanups for Long-Running Apps: Prevent stale data buildup.

- Test Cache Behavior Thoroughly: Write unit tests covering expiry, eviction, and concurrent access.

9.3 Debug Logging

If deep debugging is needed, wrapping or subclassing the cache with logs at key points (such as inserts, evictions) can help:

class DebugCache<K, V> extends RuntimeMemoryCache<K, V> {

set(key: K, value: V, ttl?: number) {

console.log(`set called with key: ${key}`);

return super.set(key, value, ttl);

}

get(key: K) {

console.log(`get called with key: ${key}`);

return super.get(key);

}

}

9.4 Handling Concurrency

Although JavaScript is single-threaded in Node.js, asynchronous operations might cause race conditions:

- Two parallel requests might both miss and set the same cache key, causing duplicate backend fetches.

- Consider locking or “in-flight request” tracking externally if this matters.

- Alternatively, use the cache’s stats to spot frequent misses on hot keys, and optimize.
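The “in-flight request” idea can be sketched as a small wrapper that remembers the pending promise for each key, so concurrent misses share one backend call. This is an illustration under assumptions: createDedupedLoader and CacheLike are hypothetical names, and the cache is only assumed to expose get and set with the signatures used in this article.

```typescript
// Assumed minimal cache shape; values are expected to be non-undefined.
interface CacheLike<V> {
  get(key: string): V | undefined;
  set(key: string, value: V, ttl?: number): unknown;
}

function createDedupedLoader<V>(cache: CacheLike<V>) {
  // Pending loads by key; entries are removed as soon as a load settles.
  const inFlight = new Map<string, Promise<V>>();

  return function getOrLoad(
    key: string,
    loader: () => Promise<V>,
    ttl?: number,
  ): Promise<V> {
    const cached = cache.get(key);
    if (cached !== undefined) return Promise.resolve(cached);

    // A load for this key is already running: share its promise.
    const pending = inFlight.get(key);
    if (pending) return pending;

    const promise = loader().then(
      (value) => {
        inFlight.delete(key);
        cache.set(key, value, ttl);
        return value;
      },
      (error) => {
        // Failures are not cached, so the next caller retries the load.
        inFlight.delete(key);
        throw error;
      },
    );
    inFlight.set(key, promise);
    return promise;
  };
}
```

In a route handler this would look like `const user = await getOrLoad(cacheKey, () => fetchUserFromDatabase(userId));`, and two requests arriving during the same miss trigger a single fetch.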

9.5 FAQ and Troubleshooting Summary

| Problem | Common Cause | Suggested Fix |
|---|---|---|
| Too many cache misses | Short TTL, small cache, bad keys | Increase TTL, maxSize, fix cache keys |
| Memory usage growth | Large cached objects, no cleanup | Tune sizes, run manual cleanup periodically |
| Users see stale data | TTL too long, no invalidation | Shorten TTL, delete keys on data change |
| Unexpected evictions | Too small cache size | Increase maxSize |
| Concurrency issues | Parallel miss+set race | Implement external locks or dedupe requests |

This section equips you to spot, diagnose, and fix common caching issues effectively.

10. Integrations with Popular Node.js Frameworks

Integrating runtime-memory-cache into your favorite Node.js frameworks like Express, Koa, or Fastify is straightforward. Let’s explore practical examples to help you get started fast.

10.1 Using runtime-memory-cache with Express

Express is one of the most popular web frameworks for Node.js. Below is a simple example demonstrating how to use runtime-memory-cache to cache API responses in Express route handlers:

import express from 'express';

import RuntimeMemoryCache from 'runtime-memory-cache';

const app = express();

const cache = new RuntimeMemoryCache({

ttl: 60000, // 1-minute TTL

maxSize: 1000,

evictionPolicy: 'LRU',

enableStats: true

});

app.get('/user/:id', async (req, res) => {

const userId = req.params.id;

const cacheKey = `user:${userId}`;

let user = cache.get(cacheKey);

if (user) {

console.log('Cache hit');

return res.json(user);

}

console.log('Cache miss - fetching from DB');

// Simulate DB call or fetch from external service

user = await fetchUserFromDatabase(userId);

cache.set(cacheKey, user);

return res.json(user);

});

app.listen(3000, () => console.log('Server running on port 3000'));

10.2 Integrating with Koa

The same approach works for Koa and other middleware-based frameworks:

import Koa from 'koa';

import Router from '@koa/router';

import RuntimeMemoryCache from 'runtime-memory-cache';

const app = new Koa();

const router = new Router();

const cache = new RuntimeMemoryCache({ ttl: 300000, maxSize: 2000, evictionPolicy: 'FIFO' });

router.get('/products/:id', async (ctx) => {

const id = ctx.params.id;

const cached = cache.get(id);

if (cached) {

ctx.body = cached;

return;

}

const product = await fetchProduct(id);

cache.set(id, product);

ctx.body = product;

});

app.use(router.routes());

app.listen(4000);

10.3 Using with Fastify

Fastify users benefit from similarly clean integration:

import fastify from 'fastify';

import RuntimeMemoryCache from 'runtime-memory-cache';

const app = fastify();

const cache = new RuntimeMemoryCache({ ttl: 120000, maxSize: 5000, evictionPolicy: 'LRU' });

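// The Params generic types request.params, which is otherwise unknown in TypeScript
app.get<{ Params: { sessionId: string } }>('/session/:sessionId', async (request, reply) => {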

const sessionId = request.params.sessionId;

let session = cache.get(sessionId);

if (session) {

reply.send(session);

return;

}

session = await fetchSession(sessionId);

cache.set(sessionId, session);

reply.send(session);

});

// Fastify v4 and later expect an options object here
app.listen({ port: 3000 });

10.4 Middleware for Caching Responses

You can wrap your cache logic into middleware to automatically cache and serve responses:

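import type { Request, Response, NextFunction } from 'express';

function cacheMiddleware(cache: RuntimeMemoryCache, ttl?: number) {

return (req: Request, res: Response, next: NextFunction) => {

// Only cache GET requests; writes must always reach the handler

if (req.method !== 'GET') {

next();

return;

}

const key = req.originalUrl;

const cachedBody = cache.get(key);

if (cachedBody) {

res.send(cachedBody);

return;

}

// Override send to cache the response body

const originalSend = res.send.bind(res);

res.send = (body) => {

// Skip error responses so a temporary failure is not served from cache

if (res.statusCode === 200) {

cache.set(key, body, ttl);

}

return originalSend(body);

};

next();

};

}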

Then use middleware in Express:

app.use(cacheMiddleware(cache, 60000));

11. Extending and Contributing to runtime-memory-cache

I designed runtime-memory-cache to be lightweight and focused but also open for growth. Whether you want to add new features, fix bugs, or customize the cache for your needs, here’s how you can extend or contribute to the project.

11.1 Understanding the Codebase Structure

The project is written in TypeScript and organized clearly for readability and maintainability:

- src/ contains the main implementation files.

- test/ holds unit tests covering core and edge cases.

- The eviction logic, TTL handling, statistics, and utility functions are modularized.

- Typed interfaces ensure type safety and help with code completion.

This structure makes it easy to find the portion you want to improve.

11.2 How to Add New Eviction Policies

Currently, the cache supports FIFO and LRU, but you can extend this by:

- Defining a new eviction strategy module that implements required hooks (e.g., how to update usage order, how to select keys to evict).

- Adjusting the main cache class to accept your custom strategy or toggling new policy strings.

- Writing unit tests to verify correctness and performance.

For example, if you want to add LFU (Least Frequently Used):

- Track access counts per key.

- On eviction, remove the key with the lowest frequency count.

- Periodically decay counts if needed to avoid stale frequency data.
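The three LFU steps above can be sketched as a small strategy object. Everything here is hypothetical: the EvictionStrategy interface and its hook names are assumptions for illustration and do not describe the package's current internals, so adapt them to the real extension points in the codebase.

```typescript
// Hypothetical hook interface; not the package's published API.
interface EvictionStrategy<K> {
  onSet(key: K): void;
  onGet(key: K): void;
  onDelete(key: K): void;
  pickVictim(): K | undefined;
}

class LfuStrategy<K> implements EvictionStrategy<K> {
  private counts = new Map<K, number>();

  // New keys start at 1; overwriting a key keeps its access history.
  onSet(key: K): void {
    if (!this.counts.has(key)) this.counts.set(key, 1);
  }

  onGet(key: K): void {
    const current = this.counts.get(key);
    if (current !== undefined) this.counts.set(key, current + 1);
  }

  onDelete(key: K): void {
    this.counts.delete(key);
  }

  // O(n) scan for the lowest count. Ties go to the earliest-inserted key,
  // because Map iterates in insertion order.
  pickVictim(): K | undefined {
    let victim: K | undefined;
    let lowest = Infinity;
    for (const [key, count] of this.counts) {
      if (count < lowest) {
        lowest = count;
        victim = key;
      }
    }
    return victim;
  }

  // Halve every count so that old popularity fades over time.
  decay(): void {
    for (const [key, count] of this.counts) {
      this.counts.set(key, Math.max(1, Math.floor(count / 2)));
    }
  }
}
```

The linear scan keeps the sketch short. A production LFU would usually maintain frequency buckets or a heap so that choosing a victim does not cost O(n) on every eviction.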

11.3 Pull Requests and Issues

I welcome contributions via GitHub. To contribute:

- Open an issue describing your proposed change or bug.

- Fork the repo, make your changes in a branch.

- Submit a pull request (PR) with clear description and tests.

- I review and collaborate on improvements before merging.

Make sure to run and add tests — coverage is important.

11.4 Feature Ideas I’m Open to Receiving

- More eviction policies (e.g., LFU, ARC).

- Async-aware cache getters/setters.

- Bulk operations for better batch performance.

- Cache event hooks for monitoring.

- Framework-specific middlewares or helpers.

- Detailed benchmarks and profiling scripts.

11.5 Tips for Maintaining Your Fork or Variant

If your cache needs diverge significantly, feel free to fork and adapt but consider merging improvements back to keep a strong, community-driven core project.

11.6 Keeping Up With Updates

I strive to maintain compatibility with the latest Node.js and TypeScript versions and welcome feedback on performance or API ergonomics. Star the GitHub repo to get notified of updates and help spread the word.

Summary:

Extensibility is key to a vibrant cache library. With clear code and modular eviction design, runtime-memory-cache is ready for you to customize or contribute to. Whether adding LFU, better stats, or integrations, your input helps the entire community.